Yani Ioannou, PhD

Assistant Professor & Schulich Research Chair

Dept. of Electrical and Software Engineering

Schulich School of Engineering

University of Calgary

I'm an Assistant Professor and Schulich Research Chair in the Department of Electrical and Software Engineering at the University of Calgary's Schulich School of Engineering, where I lead the Calgary Machine Learning Lab.

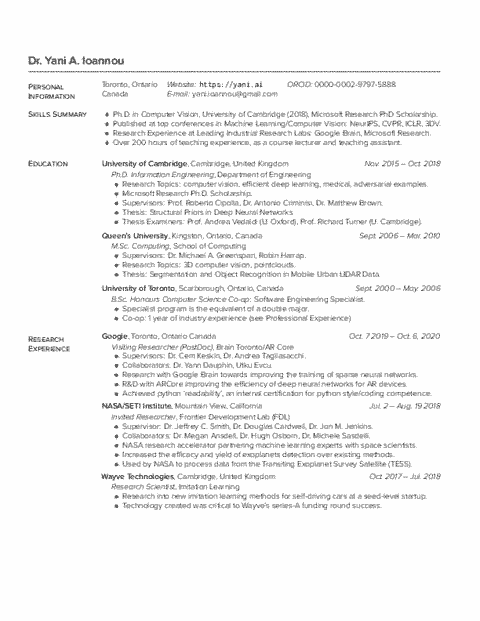

I was previously a Postdoctoral Research Fellow at the Vector Institute and University of Guelph, working with Prof. Graham Taylor, and a Visiting Researcher at Google Brain Toronto/Google AR Core.

I completed my PhD at the University of Cambridge in 2018, where I was supervised by Professor Roberto Cipolla and Dr. Antonio Criminisi and supported by a Microsoft Research Ph.D. Scholarship.

I am interested in efficient deep learning for both training and inference, sparse neural network training, and trustworthy AI for compressed neural network models. In the past, I have worked on exoplanet detection with NASA, medical imaging, and 3D computer vision methods for processing and recognizing objects in large point clouds.